While digital systems over the cloud and IoT improve business KPI efficiency, customer experience, investor ROI, productivity, and cost efficiency, they also significantly amplify the cyber threat space that cybersecurity professionals must cope with.

Critical infrastructure is the backbone of the essential daily services that keep civilisation moving: energy, healthcare, finance, transportation, and communications, to name a few. These services are so critical that their disruption would have a debilitating effect on the national economy, security, public health and safety, or any combination thereof. For example, much of the $25 trillion-plus US economy relies on critical infrastructure services. According to views reported by economists to the Council on Foreign Relations (CFR), delays caused by traffic congestion alone have cost the US economy over $90 billion a year over the last five years, while flight delays have cost it over $35 billion a year over the same period.

Much of modern critical infrastructure in developed and developing nations is driven by digital systems over the cloud, IoT, operational technology (OT), the Internet, and, recently, the AI revolution. While this improves business KPI efficiency, customer experience, investor ROI, productivity, and cost efficiency, it also significantly amplifies the cyber threat space that cybersecurity professionals must cope with. The challenge of thwarting unknown threats across every IT/OT domain before adversaries compromise critical infrastructure via the standard cyber kill chain (CKC) is similar to untying the Gordian knot. In other words, it has been proven (by researchers at MIT CAMS) that, let alone humans, even the world’s most powerful computers working together might not be able to untie this knot. This is precisely why the world has seen (and will continue to see) cyber-attacks on critical infrastructure such as Colonial Pipeline, SolarWinds, Log4j, the Capital One data breach, Kaseya, EKANS, NotPetya, and WannaCry. Add to this an entire family of reportedly non-malicious IT/OT/cloud misconfiguration issues that can hamper societal ecosystems; a recent example is the CrowdStrike outage of 2024.

Hackers and cybercriminals mostly use sophisticated and stealthy attack mechanisms called Advanced Persistent Threats (APTs) to

(i) initially gain unauthorised access and foothold into critical infrastructure (CI) systems of an enterprise (e.g., via phishing or open RDP ports);

(ii) subsequently explore the threat space stealthily and persistently via command-and-control malware spread mechanisms, and

(iii) later (after multiple weeks or months), launch the attack vector (e.g., ransomware) that debilitates the critical resources (‘crown jewels’) of the CI, which eventually leads to business disruption and adverse societal impact.

Many of the abovementioned famous cyber-attacks are seeded by APTs. In more technical jargon, (AI-powered) APTs successfully exploit each step of the standard seven-step cyber kill chain (CKC) introduced by Lockheed Martin, starting from ‘reconnaissance’ right up to ‘actions on objectives’, over months and sometimes years. Industry inputs from over 1,800 security leaders and practitioners surveyed by researchers at MIT CAMS in 2024 indicate that more than 60 percent of respondents believe that detecting APTs in critical infrastructure, and protecting against them, is getting harder by the day with the AI revolution. Additionally, nearly 90 percent of the respondents believe that AI-powered security solutions are a must-have to proactively boost the cybersecurity and resilience of critical infrastructure.

How Can AI Benefit Critical Infrastructure Security?

The use of AI has three core benefits for cyber risk management and security in critical infrastructure.

(i) AI can automate repeatable tasks, thereby hedging cyber risks arising from inevitable human factors, such as CI management personnel losing focus and missing important indicators of cyber-compromise. Furthermore, AI will help CI management precisely identify the root cause of a cyber-attack from several compromise indicator features, something that humans cannot routinely do accurately, least of all in an ever-expanding threat landscape.

(ii) AI has the power to dig out, at speed and in real time, ‘complex’ statistical relationships not only between compromise indicator variables (for both internal and external enterprise cyber-threats) but also between incidents (possibly far in the past) that might look unrelated to the gut-feeling-driven human eye, and

(iii) With the advent of generative AI, an AI suite of solutions can adaptively parse a very large space of structured, unstructured, and noisy threat-related data and output the crisp and concise cyber risk information that CI security operation centre (SOC) personnel need to improve performance on all five core functions (i.e., identify, protect, detect, respond, and recover) of the NIST Cybersecurity Framework.

Managerial Action Items Leveraging AI Use for Cyber Risk Management

In this article, we showcase and discuss four action items for managers on the most effective ways of using AI as a defence tool to improve APT cyber-risk management in critical infrastructure.

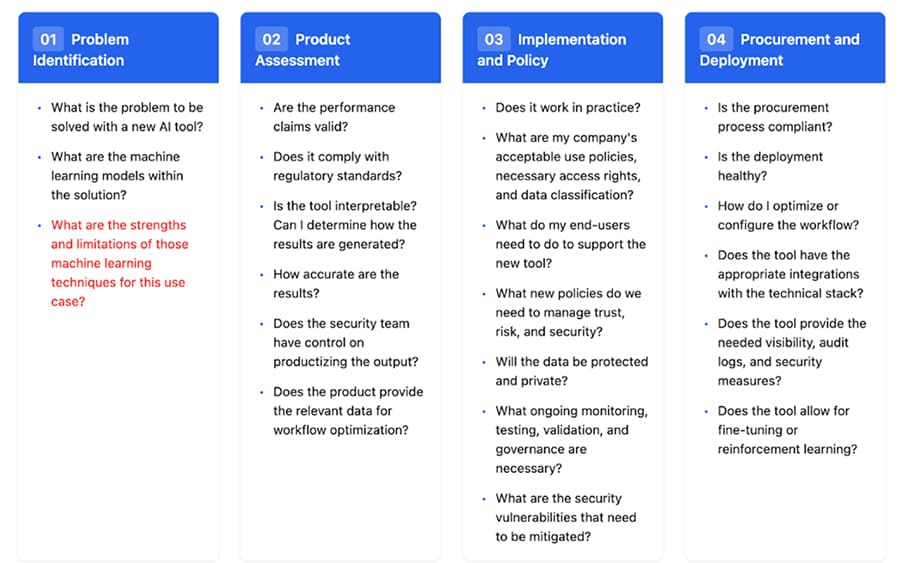

Figure 1 – A list of factors influencing enterprise budgeting to use AI for managing cybersecurity

Action Item #1 – Conduct an AI-Use Cost-Benefit Analysis for Budget Planning

Managers (representing C-Suites and the enterprise board) should do a rigorous cost-benefit analysis of the AI systems operating within the enterprise to boost APT cyber risk management (CRM) of its critical infrastructure. Such AI systems will usually need to be integrated with existing CRM frameworks, and this will incur hardware (e.g., AI-specific hardware such as GPUs), software (e.g., AI/ML training models), and firmware (e.g., those maintaining AI servers and aiding server resource and security management) costs for the enterprise.

More specifically, the enterprise needs to allocate a sufficient budget for building APT CRM AI systems and then maintaining such systems over time, keeping in mind the regulatory and compliance constraints specific to its industry sector (e.g., adhering to HIPAA and GDPR during AI/ML training and inference processes). A broad set of factors (adapted from a Darktrace case study in a fair-use fashion) affecting this budgeting analysis is shown in Figure 1. We recommend prioritising the allocation of such budgets, but only after a systematic and judicious board and C-Suite brainstorming session on topics such as

(a) proper tradeoffs on building in-house AI CRM systems versus using third-party solutions,

(b) adopting Agile methodologies to improve cost management in building and maintaining AI CRM systems, and

(c) the minimum ROI desired from AI-powered APT cyber risk management.
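The cost-benefit reasoning above can be sketched as simple arithmetic. The snippet below is a minimal illustration, assuming a basic return-on-investment formula (expected annual loss avoided, net of total cost, divided by total cost). The function names and every figure are hypothetical placeholders, not numbers from the article or from any survey.

```python
# Hypothetical sketch of an AI-use cost-benefit check for budget planning.
# All monetary figures are illustrative placeholders.

def ai_crm_roi(annual_loss_without_ai, annual_loss_with_ai,
               hardware_cost, software_cost, firmware_cost, upkeep_cost):
    """Return ROI as (loss avoided - total cost) / total cost."""
    total_cost = hardware_cost + software_cost + firmware_cost + upkeep_cost
    loss_avoided = annual_loss_without_ai - annual_loss_with_ai
    return (loss_avoided - total_cost) / total_cost


def meets_minimum_roi(roi, minimum_roi):
    """Check the ROI against the minimum the board agreed on."""
    return roi >= minimum_roi


roi = ai_crm_roi(
    annual_loss_without_ai=5_000_000,  # assumed expected annual APT loss today
    annual_loss_with_ai=2_000_000,     # assumed expected loss with AI-powered CRM
    hardware_cost=400_000,             # e.g., GPUs
    software_cost=300_000,             # e.g., AI/ML training models
    firmware_cost=100_000,             # e.g., AI server management
    upkeep_cost=200_000,               # maintenance and compliance work
)
print(f"ROI: {roi:.2f}, meets 1.5x minimum: {meets_minimum_roi(roi, 1.5)}")
```

In practice the two loss estimates are the hard part. They would come from the enterprise's own risk quantification, not from fixed constants like these.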

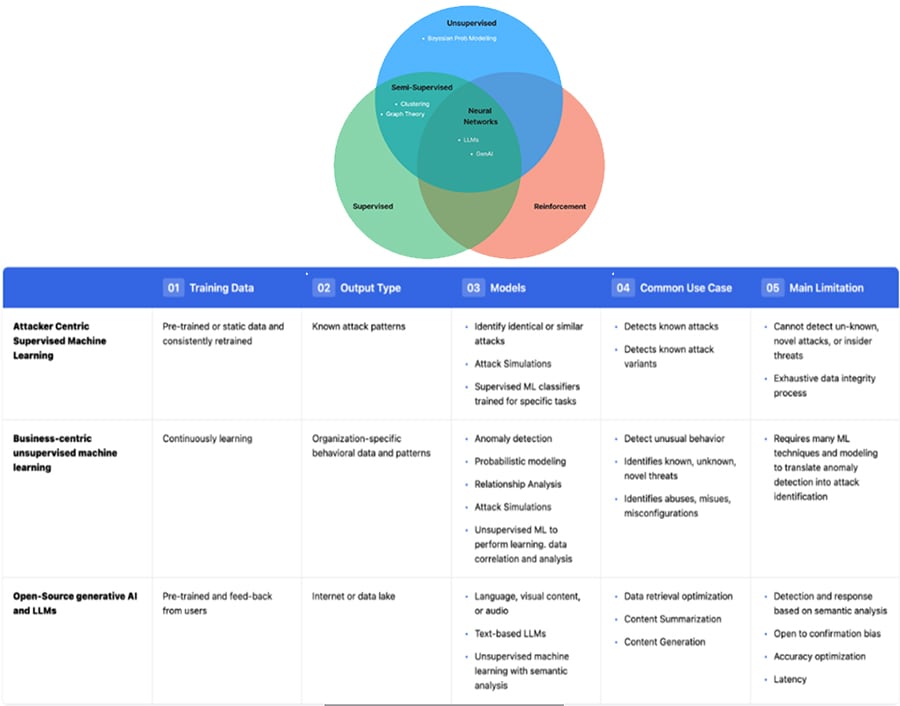

Figure 2 – A summary of AI/ML methods and their relevant cyber scenario-based applicability

Action Item #2 – Use an Adaptive Approach to Deploy the Right AI/ML tools

CI engineering managers should design and deploy a scenario-based adaptive AI approach that maps the right AI/ML APT cyber risk management tools to the cyber threat environment. An improper, non-adaptive mapping drives up the managerial marginal cost of each unit of AI system performance gain. In IT/OT systems, cybersecurity managers may have either small or large amounts of data about the APT cyber threat space with which to identify adversarial intrusions and protect CI systems from them effectively. Different data availability scenarios need different AI/ML APT CRM solutions.

Ideally, big data environments are suited to deploying (for cost-effective APT intrusion detection) Bayesian AI solutions, supervised learning tools, deep learning methods, transfer learning tools, reinforcement learning, and generative AI solutions, or a combination of these solution suites depending on the application. Given that expert/domain knowledge is extremely important in specific IT/OT sectors for accurately understanding adversarial methods of CI resource compromise, it is best to develop Bayesian AI CI intrusion solutions for APT settings and complement them with strengths from the other abovementioned tools. This recommendation is ‘attested’ by a US critical infrastructure research laboratory in discussion with MIT CAMS researchers.

For example, domain experts can draw a cyber-attack graph showing how an APT can sequentially, in a stepwise fashion (aligned with the MITRE ATT&CK framework), compromise each step of the CKC. The individual components of this attack graph can then be populated with Bayesian statistical parameters adaptively learned via powerful supervised/deep learning and/or generative AI tools, eventually resulting in accurate APT detection alarms on the relevant steps of the CKC. The common requirement in all these solution suites is the need for big data on network traffic features to optimise AI-powered APT detection performance. CI management must invest sufficiently in technology and its process management so that AI-powered APT CRM solutions always have access to such data.
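A minimal sketch of the attack-graph idea follows, under strong simplifying assumptions: the graph is reduced to a linear chain of the seven Lockheed Martin kill-chain steps, and each step's success probability is estimated as a Beta-Bernoulli posterior mean from counts. The counts are hypothetical placeholders, and the chain structure is an illustration only, not the method used by any laboratory or vendor.

```python
# Hypothetical sketch: a chain-structured Bayesian attack graph over CKC steps.
# All counts are illustrative placeholders, not measured values.

CKC_STEPS = [
    "reconnaissance", "weaponization", "delivery", "exploitation",
    "installation", "command_and_control", "actions_on_objectives",
]


def beta_posterior_mean(successes, trials, prior_a=1.0, prior_b=1.0):
    """Posterior mean of a Bernoulli rate under a Beta(prior_a, prior_b) prior."""
    return (prior_a + successes) / (prior_a + prior_b + trials)


def chain_compromise_probabilities(step_probs):
    """Return (step, P(attacker has completed this step)) for each step,
    assuming a step can only succeed if every earlier step succeeded."""
    reached = []
    cumulative = 1.0
    for step in CKC_STEPS:
        cumulative *= step_probs[step]
        reached.append((step, cumulative))
    return reached


# Assumed (successes, trials) per step, e.g. from labelled incident data.
observed = {
    "reconnaissance": (9, 10), "weaponization": (8, 10), "delivery": (6, 10),
    "exploitation": (5, 10), "installation": (4, 10),
    "command_and_control": (4, 10), "actions_on_objectives": (2, 10),
}
step_probs = {s: beta_posterior_mean(k, n) for s, (k, n) in observed.items()}
results = chain_compromise_probabilities(step_probs)
for step, prob in results:
    print(f"{step:>22}: {prob:.4f}")
```

A real attack graph would branch, and its parameters would be conditioned on observed network traffic features. The chain form is used here only to keep the probability bookkeeping visible.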

Small data environments mostly arise in APT detection in OT-driven CI because

(a) OT systems are historically too recent, compared with traditional IT systems, to have accumulated a large cyber vulnerability database, and

(b) OT-driven management culturally does not have structured processes in place across the CI enterprise to collect and process sufficient OT system and traffic data of interest to data-hungry AI/ML models.

Ideally, small data environments are suited to deploying Bayesian AI solutions, unsupervised learning tools, meta-learning methodologies such as few-shot learning, transfer learning tools, or a combination of these solution suites depending on the application at hand. As in big data CI environments, expert/domain knowledge is essential to understand adversarial methods of CI resource compromise aligned with the MITRE ATT&CK framework. Hence, as in big data environments, we recommend building attack graphs for CKCs to understand the steps APTs take to compromise systems, and then building an adaptive data-driven AI layer on top, complemented by the other abovementioned non-Bayesian tools.

A summary of AI methods and their relevant scenario-based applicability is shown in Figure 2.

Action Item #3 – Gather Sufficient Training Data for Critical Components of AI/ML Processes

The right quality and quantity of AI/ML training data is imperative for the efficiency and accuracy of AI-powered APT CRM solutions. At the same time, enterprises of varying sizes face budget constraints, cybersecurity cultural drawbacks, and inevitable (public) data availability constraints that make it hard to guarantee the perennial availability of training data of appropriate quality and quantity for each AI/ML CRM process component within the enterprise.

To satisfy budget constraints and get the best value from training data, CISOs should work with AI engineering managers to identify, in each AI/ML CRM process, the components most critical to the accuracy of APT detection probability outputs.

For example, while using Bayesian AI solutions complemented by domain expertise inputs, engineers and cyber risk managers should ensure that model parameters on the most important Bayesian attack graph nodes are robustly estimated with sufficient cyber vulnerability data. To this end, a network-scientific approach should be deployed to discover the most critical nodes of such graphs. This should be followed by a rigorous sensitivity analysis to study the degree to which adversarial intervention (including by insider adversaries) on these critical nodes affects the accuracy of APT detection probabilities. Such intervention can take the form of misconfigured traffic data or feature labels, i.e., statistical noise that biases AI/ML model parameters.
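One simple way to rank attack-graph nodes is sketched below. It assumes a small, acyclic, hypothetical attack graph and scores each intermediate node by the fraction of entry-to-target attack paths that pass through it. This path-fraction score stands in for whichever network-science centrality measure an enterprise actually chooses; the node names and edges are invented for illustration.

```python
# Hypothetical attack graph: edges point from one attacker foothold to the next.
ATTACK_GRAPH = {
    "phishing": ["workstation"],
    "open_rdp": ["workstation", "jump_host"],
    "workstation": ["domain_controller"],
    "jump_host": ["domain_controller", "historian"],
    "domain_controller": ["scada_server"],
    "historian": ["scada_server"],
    "scada_server": [],
}


def all_paths(graph, source, target, path=None):
    """Enumerate all simple paths from source to target by depth-first search."""
    path = (path or []) + [source]
    if source == target:
        return [path]
    paths = []
    for nxt in graph[source]:
        if nxt not in path:  # skip nodes already on this path
            paths.extend(all_paths(graph, nxt, target, path))
    return paths


def node_criticality(graph, entry_nodes, target):
    """Fraction of entry-to-target paths passing through each intermediate node."""
    paths = [p for e in entry_nodes for p in all_paths(graph, e, target)]
    if not paths:
        return {}
    counts = {}
    for p in paths:
        for node in p[1:-1]:  # exclude the entry node and the target itself
            counts[node] = counts.get(node, 0) + 1
    return {node: c / len(paths) for node, c in counts.items()}


crit = node_criticality(ATTACK_GRAPH, ["phishing", "open_rdp"], "scada_server")
for node, score in sorted(crit.items(), key=lambda kv: -kv[1]):
    print(f"{node:>18}: {score:.2f}")
```

Nodes with the highest scores are the natural first candidates for extra training data and for the sensitivity analysis described above.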

Through quantitative studies at MIT CAMS, researchers have observed that AI/ML model parameters for APT CRM are extremely sensitive to adversarial interventions. Based on these studies, CI managers are advised to obtain sufficient non-adversarial training data on critical components of AI/ML CRM processes, which might offset the model parameter biases caused by uncontrollable adversarial interventions.
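The offsetting effect of clean data can be illustrated with a deliberately simple model. It is an assumption made for illustration and not the MIT CAMS study's method. Suppose a node's compromise rate is estimated as the share of records labelled malicious, and an adversary injects a fixed number of records falsely labelled benign. The expected bias then shrinks as the volume of clean data grows.

```python
# Hypothetical sketch: how clean training data dilutes label-poisoning bias.
# The true rate and record counts are illustrative placeholders.

def poisoned_estimate(true_rate, n_clean, n_injected_benign):
    """Expected estimate of a compromise rate when an adversary adds
    n_injected_benign records falsely labelled benign to n_clean records."""
    expected_positives = true_rate * n_clean
    return expected_positives / (n_clean + n_injected_benign)


def bias(true_rate, n_clean, n_injected_benign):
    """Expected underestimate of the true compromise rate."""
    return true_rate - poisoned_estimate(true_rate, n_clean, n_injected_benign)


TRUE_RATE = 0.30   # assumed true compromise rate at a critical node
INJECTED = 50      # assumed number of poisoned records

for n_clean in (100, 1_000, 10_000):
    print(f"clean records: {n_clean:>6}  bias: {bias(TRUE_RATE, n_clean, INJECTED):.4f}")
```

Under this model the bias equals the true rate times the poisoned share of the dataset, so it falls roughly in proportion to the amount of clean data collected for that node.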

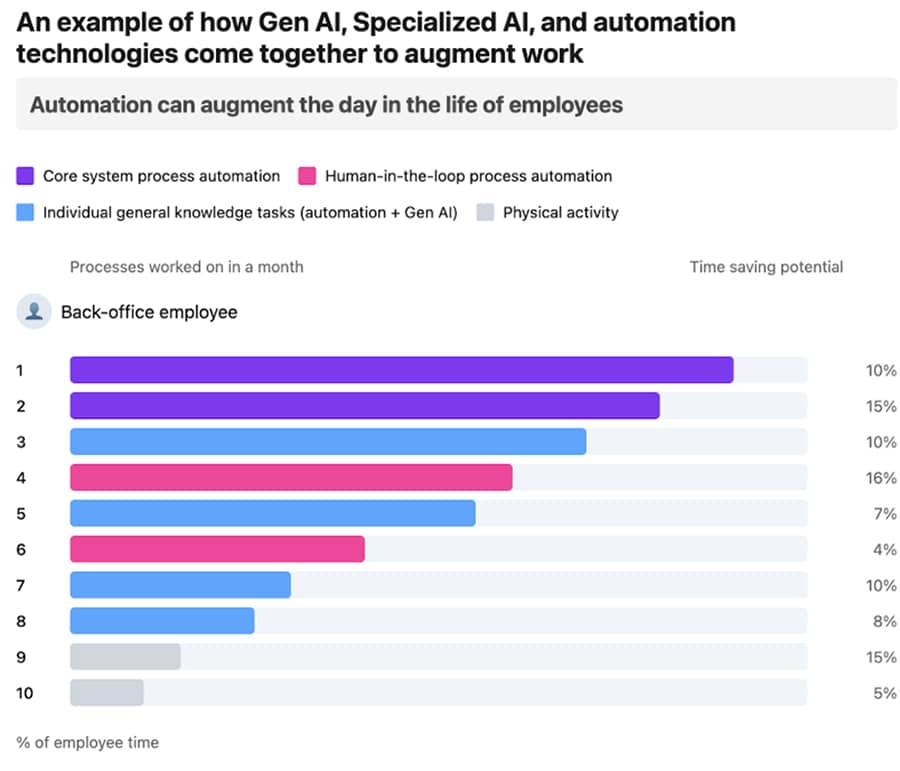

Figure 3: Statistics of technology-culture tradeoffs on AI use in an enterprise (MIT CAMS survey)

Action Item #4 – Managers Should Balance Culture-Technology Tradeoffs on AI

Enterprise management often fights technology with technology, i.e., it invests in more AI defence technology to counter AI-powered cyber threats. This includes buying and/or developing AI-powered security tools and integrating them with existing IT/OT infrastructure. While boosting tech solutions within budget constraints does no harm, it is more important to develop a culture of understanding AI-powered solutions and to operationalise AI with a good balance of culture-technology tradeoffs. After all, if integrating AI and automation within an enterprise is to bring sustainable change and positive business impact, promoting the right culture among office workers is the first step. As ‘cultural’ examples of AI integrations cutting across the people, process, and technology dimensions of an enterprise, CISOs should

(i) promote a culture of defining software and data reference architectures for integrated AI and automation across the entire technology stack,

(ii) promote core (AI) system process automation,

(iii) human-in-the-loop process automation, and

(iv) individual employee general (AI) knowledge task automation.

Ranjan Pal (MIT Sloan School of Management, USA)

Yaphet Lemiesa (MIT School of Engineering, USA)

Michael Siegel (MIT Sloan School of Management, USA)

Bodhibrata Nag (Indian Institute of Management Calcutta)

[This article has been published with permission from IIM Calcutta. www.iimcal.ac.in Views expressed are personal.]